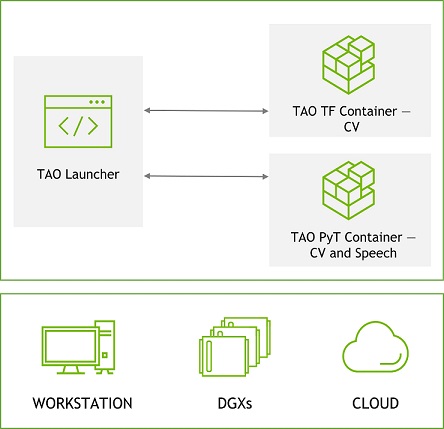

Cannot train TAO Toolkit UNet model in versions v4.0.0 and v4.0.1

Excuse me @Bin_Zhao_NV @Morganh, I’ve changed GPUs from Tesla P100 to Tesla V100 and tried again to train the TAO Toolkit UNet model with 4 GPUs in versions v4.0.0 and v4.0.1. However, I still got the error message: `device CUDA:0 not supported by XLA service while setting up XLA_GPU_JIT device number 0`. This is the output of `nvidia-smi` while UNet training was running. Is this a bug in TAO Toolkit v4.0.0 and v4.0.1? When I trained UNet in version v3.22.05, it seemed tha

Blogs Dell Technologies Info Hub

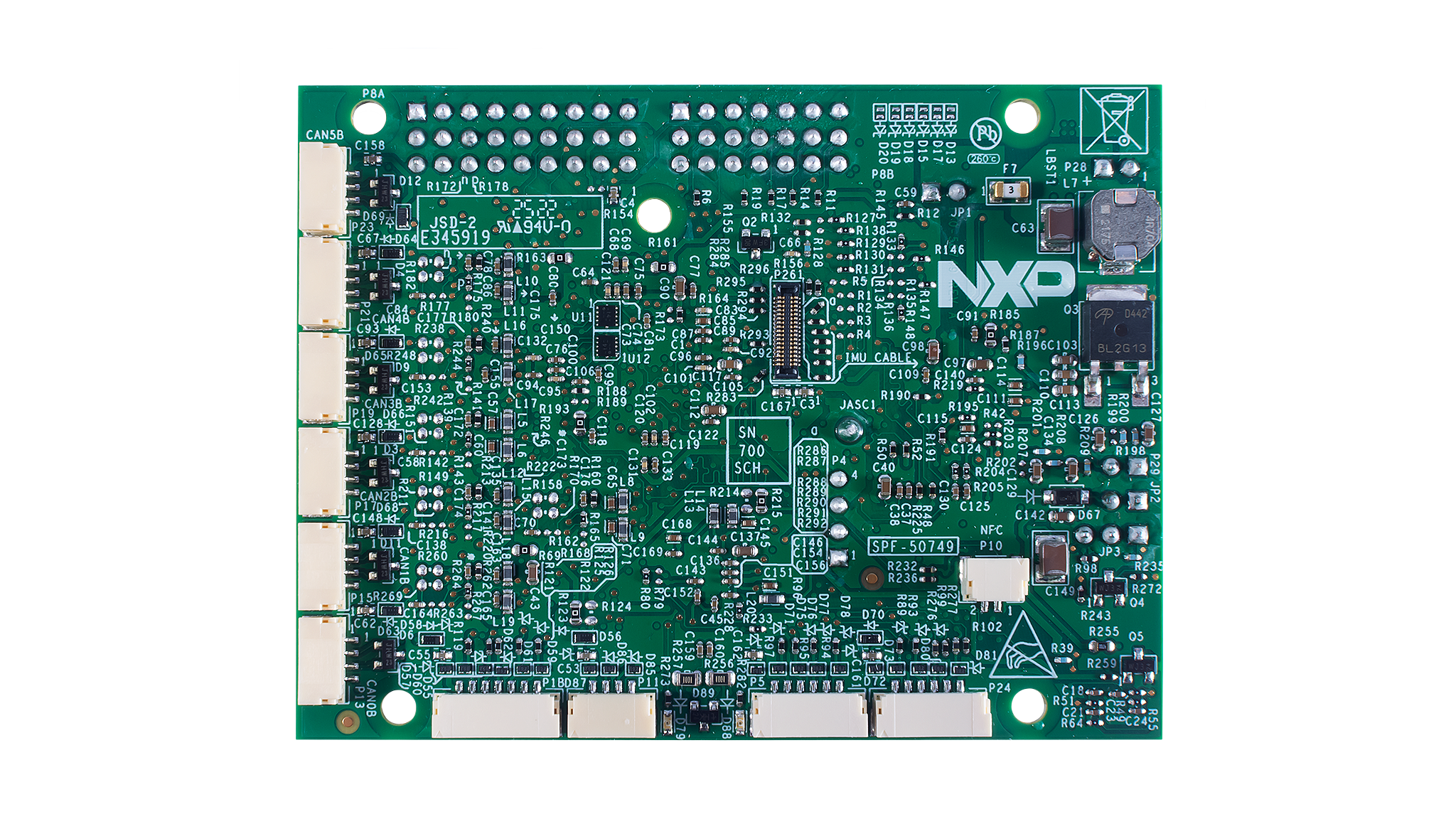

S32K344 Evaluation Board for Mobile Robotics with 100BASE-T1 and Six CANFD

Episode 15: Admiral John Richardson (Part 1) - IT Revolution

Conditional knockdown protocol for studying cellular communication using Drosophila melanogaster wing imaginal disc - ScienceDirect

Roelens Vacation Rentals: Villa Serendipity in Florida – Roelens Vacation Rentals

WRF-ARW User's Guide - MMM - UCAR

Error when training with multiple GPUs in TAO - TAO Toolkit - NVIDIA Developer Forums
The training process of Tao-Toolkit-API unet is always in Inf status - TAO Toolkit - NVIDIA Developer Forums

nvidia-tao · PyPI