Fine-tuning LLMs can help you build custom, task-specific, expert models. Read this post to learn the methods, steps, and process for fine-tuning with RLHF.

In discussions about why ChatGPT has captured our fascination, two common themes emerge:

1. Scale: Increasing data and computational resources.

2. User Experience (UX): Transitioning from prompt-based interactions to more natural chat interfaces.

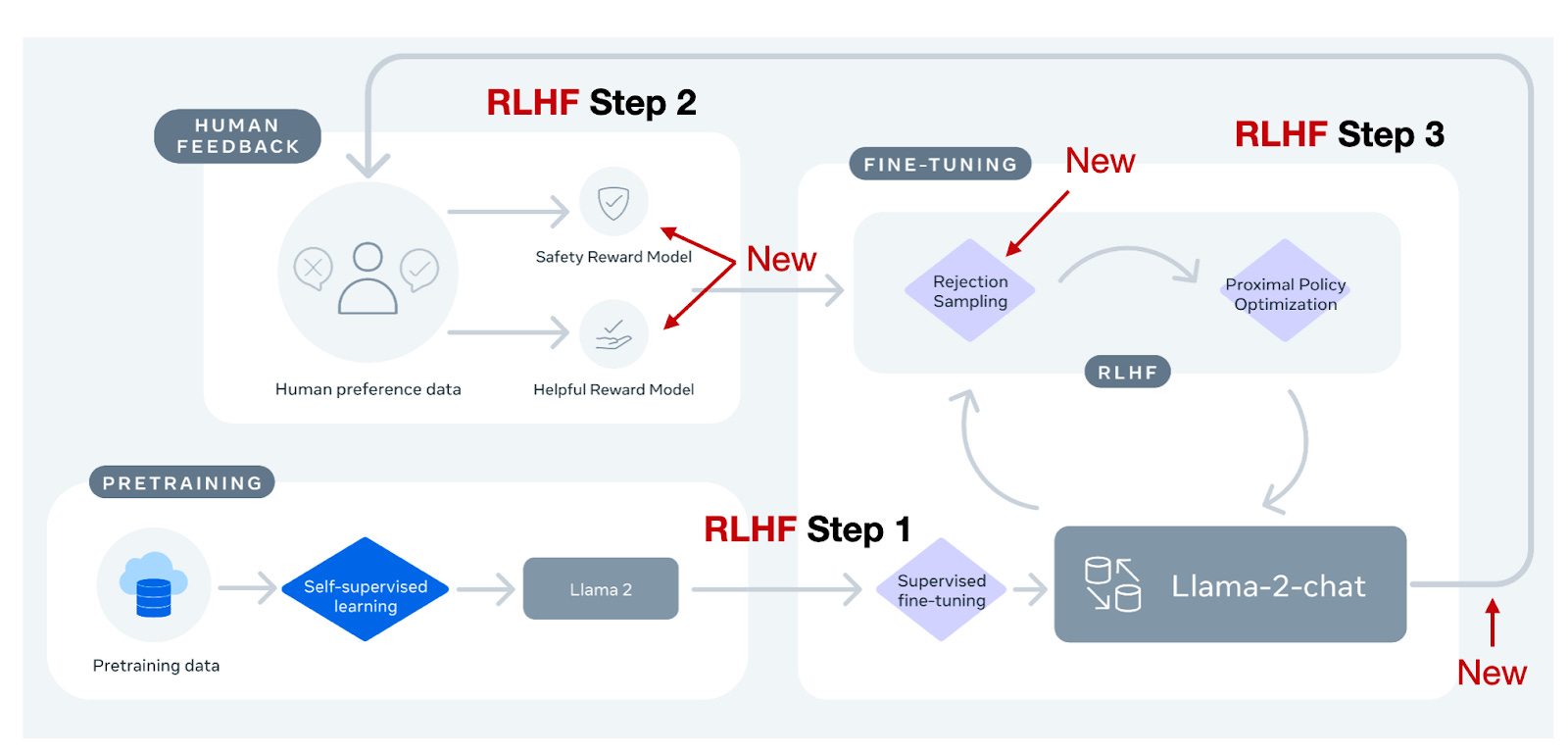

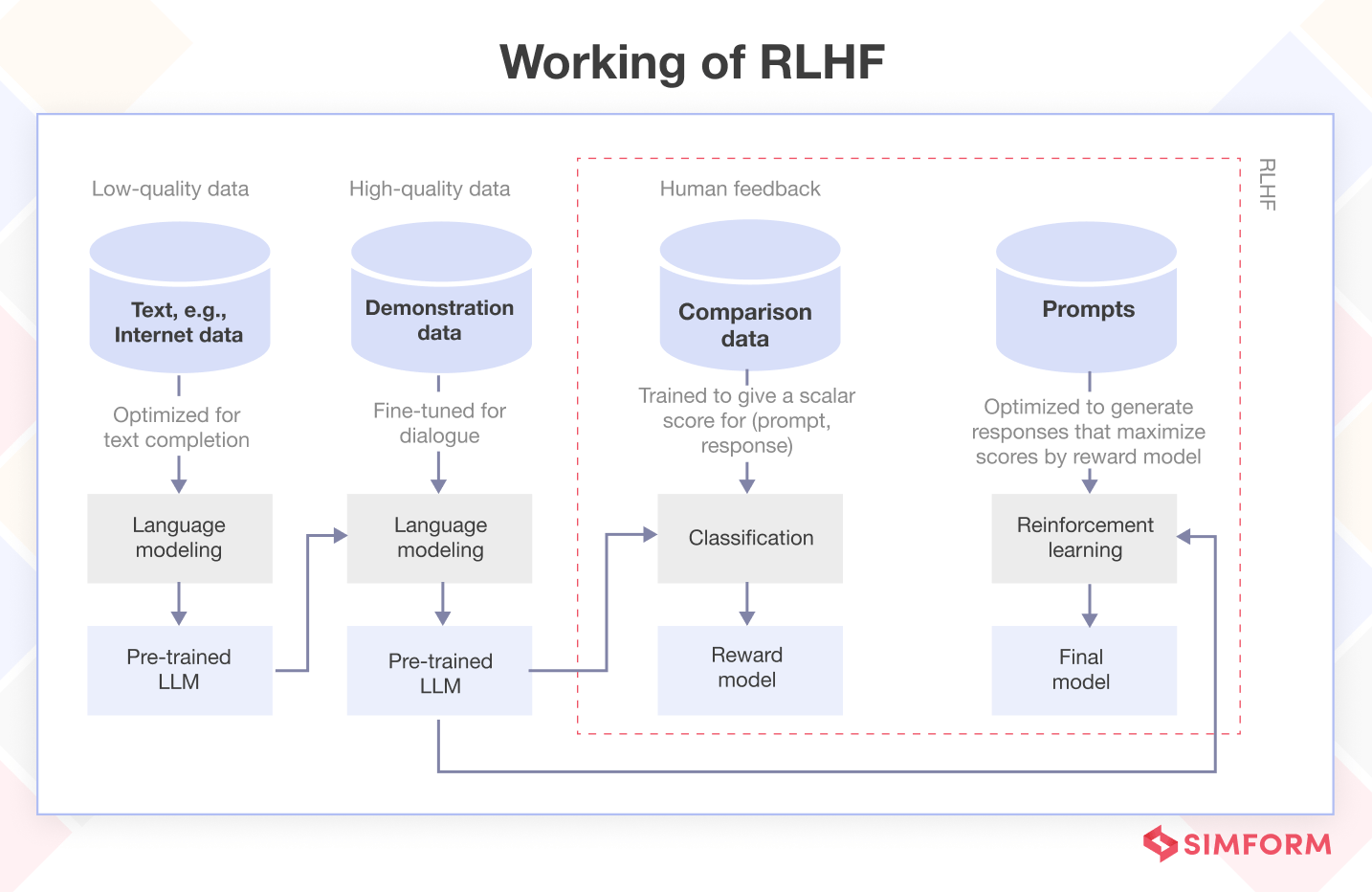

However, there's an aspect often overlooked – the remarkable technical innovation behind the success of models like ChatGPT. One particularly ingenious concept is Reinforcement Learning from Human Feedback (RLHF), which combines reinforcement learni

RLHF (Reinforcement Learning From Human Feedback): Overview + Tutorial

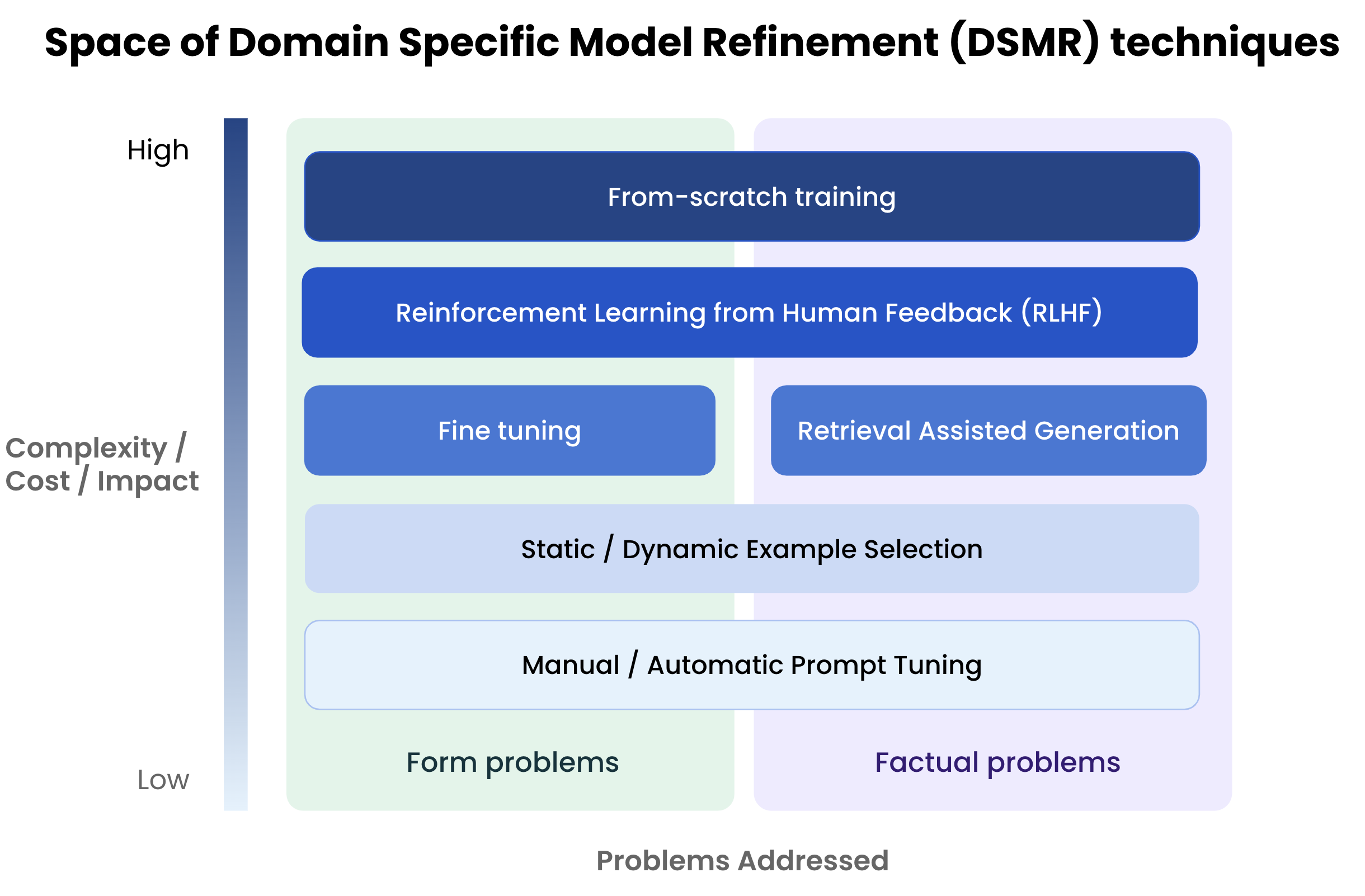

To fine-tune or not to fine-tune., by Michiel De Koninck

substackcdn.com/image/fetch/f_auto,q_auto:good,fl_

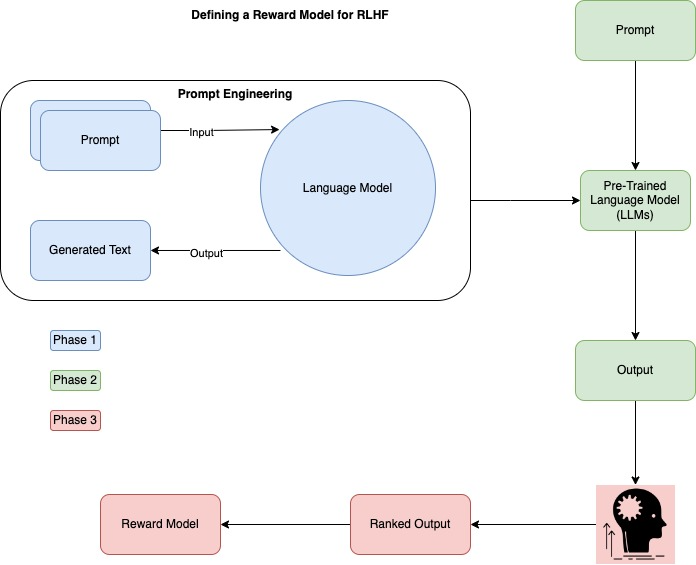

Building a Reward Model for Your LLM Using RLHF in Python, by Fareed Khan

Reinforcement Learning from Human Feedback (RLHF)

What is Reinforcement Learning from Human Feedback (RLHF)?

Akshit Mehra - Labellerr

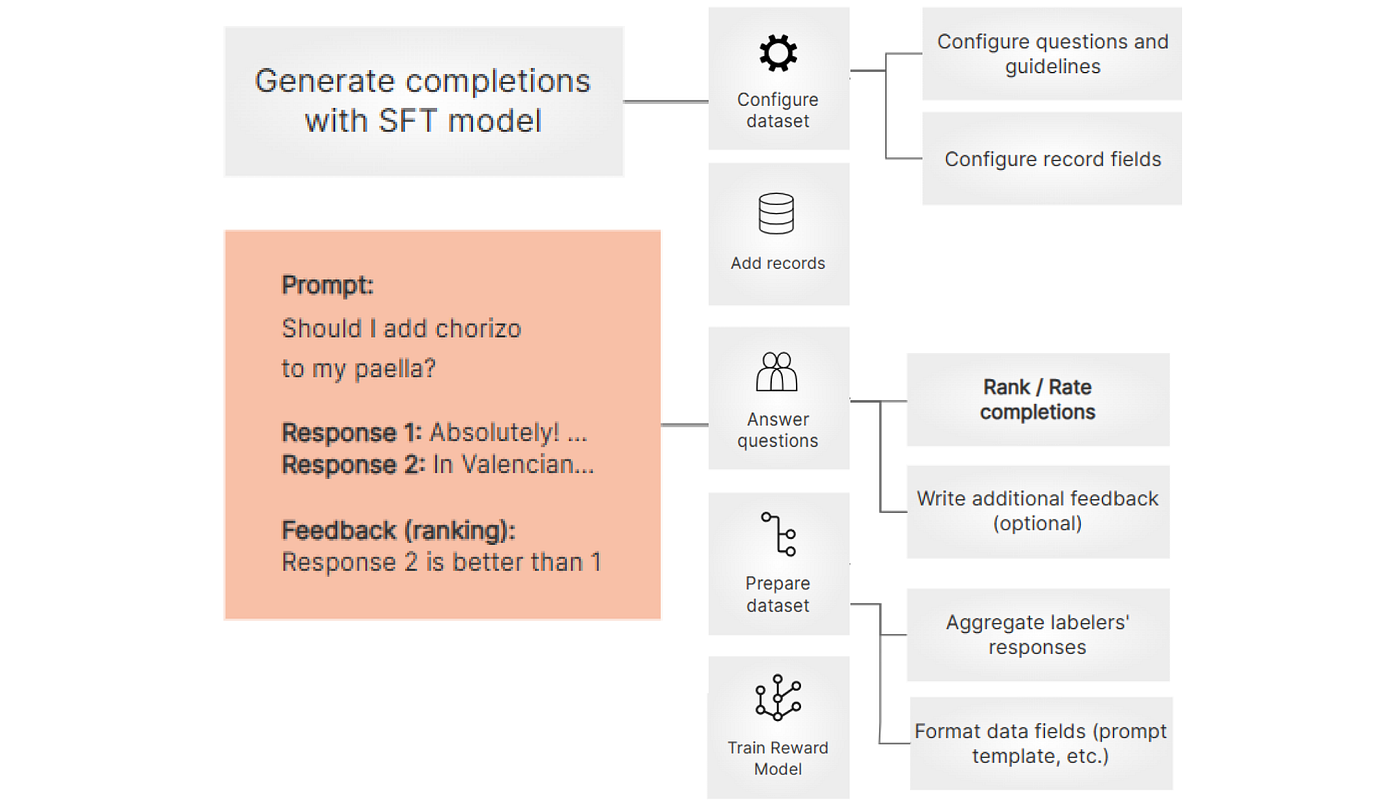

Complete Guide On Fine-Tuning LLMs using RLHF